Achieve Lower Data Center PUE

PUE (power usage effectiveness) describes how well a data center delivers energy to IT load and supports it. PUE can be leveraged to identify opportunities to improve operational efficiency within the data center.

What is PUE?

Power usage effectiveness (PUE) is a data center metric developed by The Green Grid Association (TGG) in 2007¹ and defined as the ratio of the total facility energy to the IT equipment energy.

While PUE does not measure the computational efficiency of the IT load, it can help identify where power is utilized to improve data center energy efficiency.

Measuring PUE starts with understanding where power is being used in the data center

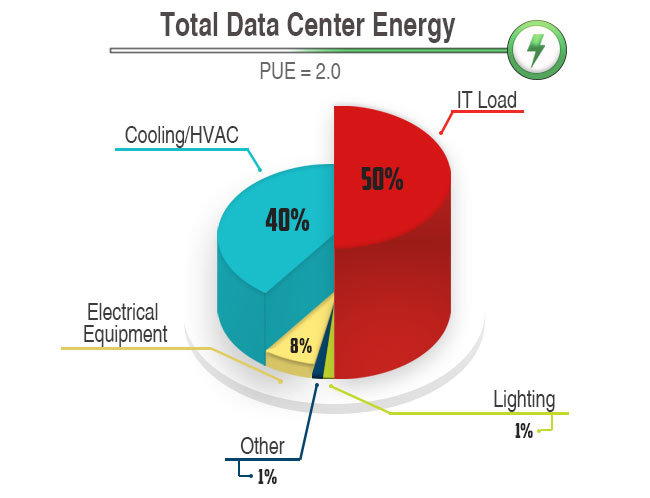

Let’s assume the PUE in your data center was calculated to be 2.0.

50% of its energy would be consumed by IT equipment and 50% by the overhead associated with running the data center and supporting the IT load.

Every watt used for IT load will require an additional watt of overhead. Figure 1 depicts a hypothetical breakdown of the total data center energy consumption.
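The arithmetic behind this example can be sketched in a few lines of Python. This is a minimal illustration, not a measurement tool: the function names and the kWh readings are hypothetical, and both readings are assumed to cover the same time period.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Return PUE: total facility energy divided by IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy consumed by overhead: 1 - 1/PUE."""
    return 1.0 - 1.0 / pue_value


# Hypothetical readings for the same period, in kWh.
example_pue = pue(total_facility_kwh=2000.0, it_equipment_kwh=1000.0)
print(example_pue)                  # 2.0
print(overhead_share(example_pue))  # 0.5
```

With a PUE of 2.0, the overhead share is 0.5, which matches the 50/50 split described above. A PUE of 1.5 would put roughly one third of the total energy into overhead.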

In the breakdown shown in Figure 1, the majority of the power used to support the IT load goes to cooling and HVAC. The rest is used by electrical equipment, lighting, and other sources such as fire suppression systems and security. This means the biggest gains can be made by decreasing the energy used for cooling. The next best area to optimize is the power distribution infrastructure.

Operation cannot improve without an understanding of the current state of the data center’s energy efficiency. With proper PUE calculation, the least efficient areas of the data center can be targeted and optimizations evaluated. This requires taking specific measurements throughout the data center.

Figure 1: Total Data Center Energy Breakdown (U.S. Environmental Protection Agency, 2007)

How to measure current PUE & data center energy consumption

TGG defines a basic (Level 1), intermediate (Level 2), and advanced (Level 3) measurement for PUE calculations². All three levels require the total facility energy to be measured at the utility input, although each level recommends additional measurement points and requires the IT load to be measured at a different location.

Level 1

A basic indication of the data center PUE by means of measuring the IT load at the Uninterruptible Power Supply (UPS) outputs.

Level 2

A more accurate data center PUE figure by measuring the IT load from the Power Distribution Unit (PDU) outputs.

Level 3

The most accurate data center PUE figure by measuring the IT load at the input of the IT equipment. This can be accomplished through metered rack PDUs.
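To see how the choice of IT load measurement point affects the reported figure, the three levels can be compared side by side. The sketch below assumes hypothetical meter names and kWh readings; only the measurement locations (UPS output, PDU output, IT equipment input) come from the level descriptions above.

```python
# Hypothetical energy readings for one period, in kWh.
readings = {
    "utility_input": 1800.0,      # total facility energy (used at all levels)
    "ups_output": 1000.0,         # Level 1 IT load measurement point
    "pdu_output": 960.0,          # Level 2 IT load measurement point
    "it_equipment_input": 940.0,  # Level 3, e.g. from metered rack PDUs
}

# Which meter supplies the IT load figure at each measurement level.
IT_METER_BY_LEVEL = {1: "ups_output", 2: "pdu_output", 3: "it_equipment_input"}


def pue_at_level(readings: dict, level: int) -> float:
    """Compute PUE using the IT load meter that corresponds to the given level."""
    total = readings["utility_input"]
    it_energy = readings[IT_METER_BY_LEVEL[level]]
    return total / it_energy


for level in (1, 2, 3):
    print(f"Level {level}: PUE = {pue_at_level(readings, level):.3f}")
```

In this made-up data set the PUE rises slightly from Level 1 to Level 3. Losses between the UPS output and the IT equipment input are counted as IT load at Level 1 but as overhead at Level 3, so a measurement taken closer to the equipment tends to give a higher, more realistic figure.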

Additional metering locations can provide more insight into your data center’s energy efficiency and can be used to predict the effects of various changes. Beneficial locations include the outputs of automatic transfer switches (ATS), the inputs of the UPS, and the inputs of mechanical equipment. Branch circuit monitoring can also provide power usage at the rack level. Measuring at these locations not only provides additional granularity but also supplies inputs for a computational fluid dynamics (CFD) model.

A CFD model uses numerical methods and algorithms to provide an accurate picture of the cooling capabilities of a data center as well as opportunities for improvement. This includes determining where hot spots have formed, where hot and cold air are mixing, and how to right-size the cooling. The model draws on power usage, equipment details, and airflow measurements on perforated tiles. Once it is complete, it’s much easier to identify cooling and airflow management improvements.

Tips to lower data center power usage effectiveness & improve efficiency

Design a floor plan to optimize cooling

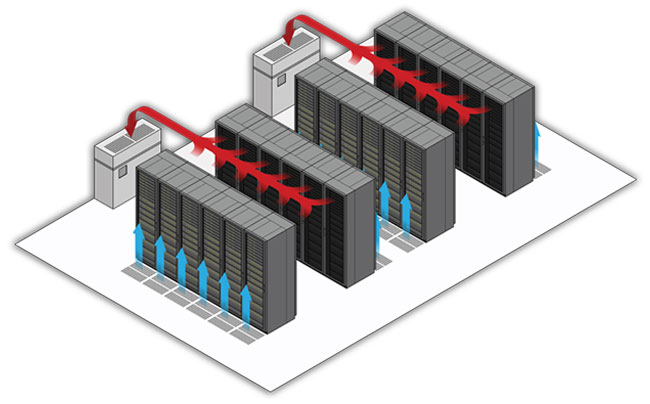

When your layout allows hot and cold air to mix, it’s more difficult – and takes more energy – to cool the data center. If a CFD study has been done, you’ll be able to identify key problem areas, and with a few basic strategies, you can isolate hot and cold air to optimize cooling throughout the data center.

- Use a hot/cold row layout. Arrange the rows of racks in an alternating hot row/cold row configuration. With the rows of racks placed back-to-back, the hot air exhaust of the equipment is separated from the cold air drawn in by the equipment. Keep all perforated tiles in the cold rows to maintain the separation of hot and cold air.

- Use blanking panels and grommets. Blanking panels close off unused space in cabinets, and brushed grommets seal cable cutouts and other holes in the raised floor, which maintains proper airflow.

- Eliminate underfloor obstructions. Any underfloor cable tray should be routed under the hot rows and not directly in front of the Computer Room Air Conditioner (CRAC) units.

- Use hot or cold aisle containment. CFD studies have shown the end of aisles and the top of cabinets are the most prevalent location for mixing of hot and cold air. This mixing can be eliminated by using a containment system (options vary, and you’ll want to choose the best for your space). For instance, cold aisle containment provides the ability to do partial containment, which creates barriers at the end of the rows but not at the top of the racks.

- Increase inlet temperature to the racks. In 2008, the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE) raised the upper limit of its recommended inlet temperature range for IT equipment⁶ from 77 degrees F to about 80 degrees F. Raising the allowed equipment inlet temperature lets the CRAC unit supply temperature be increased and makes economizers easier to use.

- Make use of economizers and free cooling. Using outside air to cool creates significant reductions in HVAC energy. Learn more about Mitsubishi Electric's fully-assembled, packaged direct expansion cooling system (DX-P).
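As an illustration of the inlet temperature tip in the list above, rack inlet readings can be checked against a target band to find racks that are running hot or being overcooled. This is only a sketch: the rack names and readings are made up, the 80 degrees F upper limit is taken from the figure quoted above, and the 65 degrees F lower limit is an assumed placeholder that should be replaced with the value from the ASHRAE guidelines you follow.

```python
def classify_inlets(inlet_temps_f: dict, low_f: float = 65.0, high_f: float = 80.0) -> dict:
    """Group racks by whether their inlet temperature is below, within, or above the band.

    low_f is an assumed placeholder; high_f follows the 80 F figure in the text.
    """
    result = {"below": [], "within": [], "above": []}
    for rack, temp_f in inlet_temps_f.items():
        if temp_f < low_f:
            result["below"].append(rack)    # possibly overcooled
        elif temp_f > high_f:
            result["above"].append(rack)    # possible hot spot
        else:
            result["within"].append(rack)
    return result


# Hypothetical inlet temperature readings in degrees F.
sample = {"rack-A1": 62.0, "rack-A2": 74.5, "rack-B1": 83.0}
print(classify_inlets(sample))
```

Racks in the "below" group may indicate that supply air is colder than it needs to be, while racks in the "above" group point to airflow problems worth checking before any set point is raised.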

Choose an energy-efficient UPS for your data center

The electrical infrastructure is the second largest overhead associated with the data center. The distribution system can be optimized by making runs as short as possible, bringing higher voltages to the equipment and using more efficient equipment, all of which minimize resistance and power losses. Given the integral nature of a UPS for your data center, it makes sense to choose a high-efficiency model.

While older UPS installations use transformers and large filters because of their 6-pulse and 12-pulse converter sections, newer technology has allowed UPS systems to become more efficient and generate less heat. The latest advancement, the use of silicon carbide (SiC) transistors, allows operational efficiencies of up to 98%, compared with 90% to 95% previously.

The elimination of transformers increases efficiencies and reduces cooling requirements, and thus upgrading your UPS can have a major impact on your data center PUE.
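A rough way to gauge the effect of UPS efficiency is to compute the power lost in the UPS for a given load. The sketch below assumes a hypothetical 500 kW load and treats efficiency as a single fixed number; real UPS efficiency varies with load level and operating mode, so the results are indicative only.

```python
def ups_input_power_kw(load_kw: float, efficiency: float) -> float:
    """Power drawn at the UPS input to deliver load_kw at the output."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return load_kw / efficiency


def ups_loss_kw(load_kw: float, efficiency: float) -> float:
    """Power lost inside the UPS (input power minus delivered load)."""
    return ups_input_power_kw(load_kw, efficiency) - load_kw


load_kw = 500.0  # hypothetical IT load carried by the UPS
for efficiency in (0.90, 0.95, 0.98):
    loss = ups_loss_kw(load_kw, efficiency)
    print(f"{efficiency:.0%} efficient: {loss:.1f} kW lost")
```

For this load, the losses come to roughly 55.6 kW at 90%, 26.3 kW at 95%, and 10.2 kW at 98%. Because those losses are largely given off as heat, a more efficient UPS also reduces the load on the cooling system.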

Install energy-efficient lighting

Efficient lighting can play a large part in lowering the PUE, even though it makes up only a small percentage of the total load. The goal is to have the lights off when not needed and use less energy when they are. LED bulbs, motion sensors, and lighting control are key components to optimize lighting.

LED lighting (particularly in conjunction with a central power-conversion module) will use less energy than the common fluorescent lighting typically found in a data center, eliminating electrical losses and decreasing heat.

You may also want to consider establishing a sensor network tied into the central control system that dynamically changes the lighting so it’s only on where the technician is working. This centralized control system can also collect data for insight into energy usage.

Consider all updates together to balance data center PUE

Siloed improvements in efficiencies can result in a higher PUE. Because PUE is a ratio, reducing IT energy without a matching reduction in overhead raises the figure even though total consumption falls. If updates are not balanced (for example, failing to adjust cooling to account for a more efficient UPS or computing equipment that uses less energy), you won’t see a positive impact on your data center’s PUE. Your infrastructure updates should work in concert so that overhead energy can decrease when IT load decreases. If your PUE is still high after making some updates, it may be that you’re not using your conserved energy efficiently.
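The effect of an unbalanced update can be shown with a small calculation. The loads below are hypothetical and the function name is illustrative; the point is only how the ratio moves when IT load changes and overhead does or does not follow.

```python
def pue_from_loads(it_kw: float, overhead_kw: float) -> float:
    """PUE computed from IT load and overhead load: (IT + overhead) / IT."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return (it_kw + overhead_kw) / it_kw


# Hypothetical loads in kW.
before = pue_from_loads(it_kw=1000.0, overhead_kw=800.0)    # starting point
siloed = pue_from_loads(it_kw=800.0, overhead_kw=800.0)     # IT load cut, cooling unchanged
balanced = pue_from_loads(it_kw=800.0, overhead_kw=560.0)   # cooling adjusted to match

print(f"before:   {before:.2f}")    # 1.80
print(f"siloed:   {siloed:.2f}")    # 2.00
print(f"balanced: {balanced:.2f}")  # 1.70
```

In the siloed case the facility draws less total power than before (1,600 kW instead of 1,800 kW), yet the PUE gets worse because the overhead did not shrink with the IT load. Only when the overhead is reduced as well does the PUE improve.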

Machine learning and neural networks may be able to help you more accurately analyze a complex infrastructure by looking at the systemic impact of a single change. Establishing governance of best practices will help lower PUE and maintain efficiencies on a regular basis. You may even want to consider a containerized system that would house all cooling and power.

Enhance the energy efficiency and lower the PUE of your data center

Ready to take a step towards reducing your data center's energy consumption? Mitsubishi Electric can help you upgrade to a more efficient UPS.

1 The Green Grid. (2007, February 20). Green Grid Metrics: Describing DataCenter Power Efficiency. Retrieved February 18, 2015, from www.thegreengrid.org: http://www.thegreengrid.org/en/Global/Content/white-papers/Green-Grid-Metrics

The TGG white paper #49, “PUE: A Comprehensive Examination of the Metric”, is an invaluable resource for any data center wanting to report and use PUE.

2 The Green Grid. (2012). PUE: A Comprehensive Examination of the Metric. Retrieved February 18, 2015, from www.thegreengrid.org: http://www.thegreengrid.org/en/Global/Content/white-papers/WP49-PUEAComprehensiveExaminationoftheMetric

uptimeinstitute. (2011, June 1). Google Data Center Efficiency Lessons that apply to all Data Centers. Retrieved February 20, 2015, from https://www.youtube.com/watch?v=0m-yRYEMZVY

Additional Sources

Sean Evanuik, EIT, Mitsubishi Electric Power Products, Inc., UPS Division, Application Engineer

U.S. Environmental Protection Agency. (2007, August 2). Report to Congress on Server and Data Center Energy Efficiency Public Law 109-431. Retrieved February 24, 2015, from www.energystar.gov: http://www.energystar.gov/ia/partners/prod_development/downloads/EPA_Datacenter_Report_Congress_Final1.pdf

ASHRAE TC 9.9. (2011). 2011 Thermal Guidelines for Data Processing Environments - Expanded Data Center Classes and Usage Guidance. Retrieved March 4, 2015